Iguazio + NVIDIA EGX: Unleash Data Intensive Processing at the Intelligent Edge

Yaron Haviv | October 22, 2019

Nowadays everyone’s moving core IT services to the cloud, and the incentive is clear. Why buy servers and software to manage your own CRM, ERP or Office systems when you can use cloud services like Salesforce or Office 365?

Developers love the cloud. In just a few clicks they spin up a new VM or container and attach it to a scalable database as a service, object storage and API gateways. To think we used to wait for IT to buy, install and configure servers with that entire software stack.

However, it is evident that new applications, the explosion of intelligent devices and privacy constraints mandate data and computing at the edge. Maybe Peter Levine’s “end of the cloud” prophecy is overstated, but analysts agree that edge computing will become dominant.

For example, retail stores embed cameras and sensors to track customer purchases, provide real-time recommendations and monitor inventory levels. Forwarding such massive volumes of video and sensor data to the cloud for processing is not practical.

Here’s another example. On a large ship or oil rig, sensors and cameras collect large amounts of data that are used to detect critical failures. Short response times are needed, and internet connectivity cannot be relied on. The only practical solution is to have a “mini cloud” on board.

Many organizations collecting large amounts of data at the edge or dealing with sensitive data want to run machine learning models. There must be a way to seamlessly extend the cloud to the edge.

Limitations of Traditional Edge Solutions

So what’s the novelty? If edge gateways send sensor data to the cloud, isn’t my home set-top box an edge?

Edge gateways are great for applications which pass data along to the cloud with minimal local computation, but a growing number of applications store larger amounts of data locally and need significant computation power next to it. For those applications, moving data and computation to the cloud is not practical due to bandwidth, latency and data privacy constraints. The same is true for mission-critical applications, which cannot depend on intermittent internet connectivity.

If all we need is more capacity or performance, why not just install a bunch of servers with VMs, attach traditional storage or use hyper-converged infrastructure to form a “private cloud”?

Most intelligent applications are developed and delivered in the cloud because of advanced cloud services for data management, stream processing, machine learning, identity management, serverless functions, etc. Businesses rarely take VMs and build their own stack from scratch, because they would spend 90% of their time debugging and scaling infrastructure instead of building their applications on pre-integrated data and AI cloud services. The need is for a cloud-like Intelligent Edge.

The edge cannot be treated as a silo. The same applications may be developed or tested in the cloud and “pushed” for deployment at the edge, multiple edge solutions may be centrally controlled from the cloud, and data needs to flow between the cloud and the edge securely and seamlessly. This calls for an integrated stack which can extend from the cloud to the edge.

Edge locations may be constrained by space and power, and traditional data center solutions are usually general purpose and inefficient. Therefore, an Intelligent Edge solution should leverage the computation density of GPUs coupled with dense NVMe/SSD storage, provided as a scalable appliance which requires minimal IT intervention and is centrally controlled from the cloud.

Introducing Iguazio’s GPU Powered Intelligent Edge

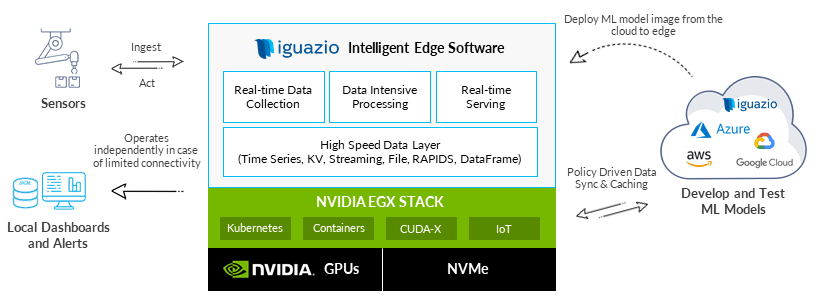

Iguazio’s Intelligent Edge solution, powered by the NVIDIA EGX platform, enables data- and compute-intensive processing at the edge with cloud usability and seamless cloud integration, allowing businesses to:

- Develop and deploy edge applications faster

- Maximize performance and scalability while consuming less space, power and IT labor

- Have the same development experience and tools in the cloud and at the edge

- Seamlessly and securely move workloads and data between the cloud and the edge

- Maximize the utilization of GPUs across data, machine learning and deep learning applications

- Focus on building applications without managing infrastructure or middleware

Iguazio provides an Intelligent Edge software stack which runs on servers equipped with high-density NVIDIA GPUs and NVMe flash. The same stack can also run in the cloud or on-premises and is tightly integrated with services delivered by the three major cloud providers.

Multiple Intelligent Edge servers are clustered together using Kubernetes (Iguazio-provided or certified third-party Kubernetes) and form a “micro-cloud” which is centrally controlled from the cloud.

Iguazio’s Intelligent Edge software comes with:

- High-performance managed data services (file, object, NoSQL, SQL, time series and messaging)

- Elastic serverless functions (Nuclio) for real-time event processing, data processing, machine learning, model and API serving

- Leading open-source frameworks (Spark, Dask, TensorFlow, Jupyter, Horovod, Presto, Prometheus, Grafana and more) packaged as a managed service

- Management services: Security and RBAC, monitoring, logging, remote software upgrades, automated workflows, remote control and automated data movement to/from the cloud

- Out-of-the-box integration of NVIDIA GPUs and the NVIDIA EGX stack: pre-integrated containers, NVIDIA Kubernetes enhancements, deep learning, vision support and NVIDIA RAPIDS for massively parallel data processing and machine learning

Real-time AI and Analytics on Large, Complex Datasets

Iguazio’s Intelligent Edge processes extremely large and fast data feeds, contextualizes them with historical or operational data, runs AI algorithms and acts immediately. It runs microservices and serverless functions (Nuclio) with real-time access to data and extreme concurrency, which allows new intelligent applications to be built rapidly without sacrificing performance.
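
To make the serverless part more concrete, here is a minimal sketch of what an event-processing function might look like. It follows the `handler(context, event)` entry point used by Nuclio Python functions; everything else is an assumption made for illustration and does not come from Iguazio: the payload fields (`sensor_id`, `temperature_c`), the `TEMP_LIMIT_C` threshold, and the `make_context` / `make_event` stand-ins, which exist only so the function can be tried locally without a Nuclio runtime.

```python
import json
from types import SimpleNamespace

# Hypothetical alert threshold, chosen only for this example.
TEMP_LIMIT_C = 90.0


def handler(context, event):
    """Nuclio-style entry point: invoked once per incoming event."""
    body = event.body
    # The body may arrive as raw bytes/text or as an already-decoded dict.
    if isinstance(body, (bytes, str)):
        body = json.loads(body)

    reading = float(body["temperature_c"])
    alert = reading > TEMP_LIMIT_C
    if alert:
        context.logger.info(f"sensor {body['sensor_id']} over limit: {reading}")

    # Return a small JSON document describing the decision.
    return json.dumps({"sensor_id": body["sensor_id"], "alert": alert})


# Local stand-ins (assumptions) that mimic the two objects the handler receives.
def make_context():
    return SimpleNamespace(logger=SimpleNamespace(info=print))


def make_event(payload):
    return SimpleNamespace(body=json.dumps(payload).encode())


if __name__ == "__main__":
    event = make_event({"sensor_id": "pump-1", "temperature_c": 95.5})
    print(handler(make_context(), event))
```

In a real deployment the runtime would supply `context` and `event`, and the decision step would more likely call a trained model than compare against a fixed threshold.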

Iguazio’s integrated real-time multi-model database supports simultaneous access to the same data through multiple open and standard APIs (SQL, NoSQL, Python pandas, Spark DataFrames, streaming, object/file, Prometheus and Grafana time series APIs, etc.). It simplifies real-time pipelines in which different functions ingest, enrich, infer, act and visualize the same data.

The solution delivers GPU as a Service, which maximizes resource sharing and utilization and removes data bottlenecks. It is integrated with NVIDIA RAPIDS for GPU-accelerated data analysis.

Continuous and Automated Deployment via Microservices and Serverless

Software distribution in large federated environments can be extremely complicated. Out-of-the-box integration of serverless functions and microservices lets users run and test software in the cloud and use automated CI/CD pipelines. Developers decide, either automatically (based on test results) or manually, to deploy or upgrade the microservices on some or all of the edge locations, which are selected using labels. Deployment follows a declarative model similar to Kubernetes: a user specifies the desired software versions, and the system automatically reconciles through rolling upgrades, or reverts to the original version in case of failure.
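
The label-based, declarative rollout described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Iguazio’s implementation: `EdgeSite`, `matches` and `reconcile` are hypothetical names, the health check is simulated with a set of versions that are known to work at each site, and the choice to revert every site touched so far (rather than only the failing one) is an assumption the article does not specify.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeSite:
    name: str
    labels: dict
    version: str  # version currently running at this site
    # Simulated health check: versions that start correctly at this site.
    healthy_versions: set = field(default_factory=set)

    def deploy(self, version):
        """Simulated deployment; returns True if the health check passes."""
        self.version = version
        return version in self.healthy_versions


def matches(site, selector):
    """True if the site carries every label in the selector."""
    return all(site.labels.get(k) == v for k, v in selector.items())


def reconcile(sites, selector, desired_version):
    """Drive every selected site to desired_version, one site at a time.

    On the first failed health check, every site touched so far is reverted
    to the version it ran before. Returns (succeeded, changed_site_names).
    """
    previous = {}
    for site in sites:
        if not matches(site, selector) or site.version == desired_version:
            continue  # not selected, or already in the desired state
        previous[site.name] = site.version
        if not site.deploy(desired_version):
            by_name = {s.name: s for s in sites}
            for name, old_version in previous.items():
                by_name[name].version = old_version
            return False, []
    return True, list(previous)


if __name__ == "__main__":
    sites = [
        EdgeSite("store-1", {"region": "eu"}, "1.0", {"1.0", "1.1"}),
        EdgeSite("rig-1", {"region": "us"}, "1.0", {"1.0"}),
    ]
    print(reconcile(sites, {"region": "eu"}, "1.1"))
    print([(s.name, s.version) for s in sites])
```

The user only states the target (a label selector and a version); the loop works out which sites differ from that target and what to do about it, which is the same idea behind a Kubernetes controller.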

Vertical Applications and Markets

The GPU-powered Intelligent Edge enables customers in various fields to take advantage of a modern, self-service architecture for intelligent, federated cloud-to-edge solutions and to overcome operational challenges. Examples include: Manufacturing, Automotive, Healthcare, Smart Cities & Buildings, Retail, Financial, Oil & Gas, Telcos, Cybersecurity.