Blog: Build and Scale Data-Driven Operational ML Pipelines with Pure Storage and Iguazio

Technical blog by JB THOMAS, Principal Field Solutions Architect at Pure.

Iguazio and Pure Storage enable enterprises to take a production-first approach to operationalizing AI/ML, with a joint solution for building and running scalable, production-grade AI/ML applications. With the right architecture and building blocks, companies can support diverse and growing business needs efficiently and cost-effectively. Running the Iguazio MLOps Platform on Pure Storage compute and storage infrastructure lets ML teams continuously roll out new AI services while focusing on their business applications rather than the underlying infrastructure.

Iguazio offers an MLOps platform that automates and accelerates the path to production. Its serverless, managed-services approach shifts the focus from managing a sprawl of Kubernetes resources to consuming a set of higher-level MLOps services that run over elastic resources. This significantly reduces capital and operational costs and accelerates time to market. The platform includes a built-in feature store, real-time model serving, and model monitoring.

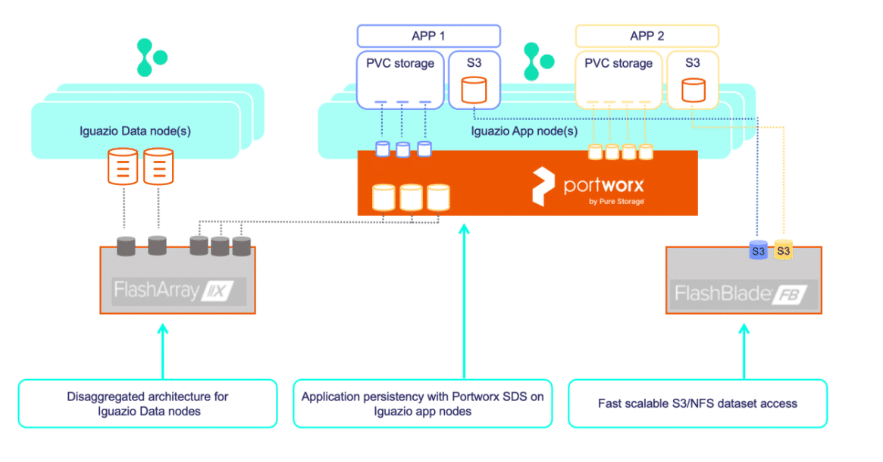

Pure Storage provides storage products suited to the challenges of data science, delivering speed and simplicity at scale. Unstructured data can be organized as files or objects on Pure FlashBlade and accessed directly by applications. Iguazio's real-time feature store can access structured data on high-performance, low-latency NVMe storage using Pure FlashArray. Portworx by Pure Storage, a Kubernetes-native storage platform, can support the persistence needs of stateful microservices.
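To make the Portworx point more concrete, here is a minimal sketch (not part of the original solution) of how a stateful microservice might request persistent storage on Kubernetes: it builds a standard PersistentVolumeClaim manifest in Python. The StorageClass name `portworx-sc`, the claim name `feature-store-db`, and the 50 GiB size are hypothetical placeholders. In a real cluster, the Portworx-backed StorageClass and its parameters are defined by the cluster administrator.

```python
import json


def portworx_pvc(name: str, size_gi: int, storage_class: str = "portworx-sc") -> dict:
    """Build a Kubernetes PersistentVolumeClaim manifest for a stateful service.

    NOTE: "portworx-sc" is a hypothetical StorageClass name (an assumption);
    substitute the Portworx-backed StorageClass defined in your cluster.
    """
    if size_gi <= 0:
        raise ValueError("size_gi must be positive")
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # One node mounts the volume read-write, typical for a database pod.
            "accessModes": ["ReadWriteOnce"],
            # The StorageClass selects the provisioner (assumed here to be Portworx).
            "storageClassName": storage_class,
            # Requested capacity, expressed in Kubernetes quantity notation.
            "resources": {"requests": {"storage": f"{size_gi}Gi"}},
        },
    }


if __name__ == "__main__":
    # "feature-store-db" is a hypothetical claim name used for illustration.
    print(json.dumps(portworx_pvc("feature-store-db", 50), indent=2))
```

Because Kubernetes accepts JSON manifests as well as YAML, the printed output can be saved to a file and applied with `kubectl apply -f`. The pod that needs the volume then references the claim by name, while the StorageClass decides which provisioner creates the underlying volume.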

Using the joint Pure-Iguazio solution, enterprises can: